For the last year I have been running Microsoft Hyper-V on Server 2019. Due to mounting issues I moved over to VMware vSphere. This first post discusses my Hyper-V setup and my feedback on it; the next post will cover my migration and new setup. When I started building out my home setup I was studying for a Windows Server certification for work. Between that and about half of my virtual machines at home being Windows, Hyper-V was the natural choice of hypervisor. Two features stood out to me: Dynamic Memory, because my home setup was not that large, and automatic virtual machine activation (Microsoft Doc). Later, I attempted to run Storage Spaces Direct (S2D; SSD would be a confusing acronym, so Storage Spaces Direct goes by S2D), except my setup was not supported, which made me run a… not recommended configuration… more on that soon. I also kept having issues with the Hyper-V management tools. I decided it was time to migrate from Hyper-V and S2D to VMware vSphere and vSAN.

(Please note that my feedback here covers Windows Server 2019 and VMware vSphere 7.0.)

Selecting a Hypervisor

I wanted to briefly go over more of my thought process when selecting a hypervisor. I already mentioned some of the reasons Hyper-V made sense, but there was more to it. I started looking for a Type-1 hypervisor a while ago; I was going to be running on an Intel NUC with a few Windows and Linux VMs. This being a homelab, I thought I would look at free options.

Having used Proxmox years ago and hit a ton of issues, I wanted to steer clear of it (looking back, this probably was not fair; it had been several years since I last used it, and I believe it has gotten better). I had also used Citrix Hypervisor (formerly XenServer) and had many issues with it, including a storage array killing itself randomly during one reboot. One of the requirements I gave myself was to have a real management system; I did not want to run KVM on random Linux hosts. That brought me to the 2 big ones, VMware and Microsoft. VMware has a lot of licensing around different features, but it was the system I knew better. I could get a VMware User Group (VMUG) membership for homelabs, and that would take care of the licensing. On the other hand, since I was studying for Windows Server tests, and the book covered different Windows Server and Hyper-V features, I thought I would give Hyper-V a try. The following are the things I liked about it, and then what turned me away from it.

Great Things About Hyper-V

I want to give a fair overview of my year plus running Hyper-V. There are some great features. Dynamic Memory lets you run modern OSes with an upper and lower limit on memory, so most of the time, while the VMs are idling, your memory footprint is very low. Another great feature is the automatic activation mentioned earlier: as long as your Hyper-V host is an activated Windows Server 2019 Datacenter install (Microsoft documents this feature for Datacenter hosts, not Standard or the free Hyper-V Server), it can pass that activation to your guests and allow you to run Server 2012 R2 and later. Because all of the services run on Windows, out of the box you get all the benefits that come with it, such as creating group policies for your servers and using them to do a lot of your fleet management. I have recently started using Windows Admin Center, which gives you a single view of all your Windows systems and allows you to update them all in one place. Hyper-V works well if you have a single node and want to do basic things with it; when you move to clustering and advanced storage, Hyper-V starts to give you a lot of issues.
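As a rough illustration of the Dynamic Memory limits described above, here is a minimal sketch using the Hyper-V PowerShell module. The VM name and the byte values are placeholders I made up, not settings from my lab.

```powershell
# Hypothetical VM name; replace with one of your own VMs.
$vmName = 'lab-linux-01'

# Turning Dynamic Memory on or off requires the VM to be powered off.
Stop-VM -Name $vmName

# Lower limit, boot-time allocation, and upper limit for the guest.
Set-VMMemory -VMName $vmName `
    -DynamicMemoryEnabled $true `
    -MinimumBytes 512MB `
    -StartupBytes 1GB `
    -MaximumBytes 4GB

Start-VM -Name $vmName

# Compare what each VM is assigned right now against what it is demanding.
Get-VM | Select-Object Name, MemoryAssigned, MemoryDemand, MemoryStatus
```

On an idle lab, the `MemoryAssigned` column should sit well under the configured maximum, which is where the small footprint comes from.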

General Hyper-V Issues

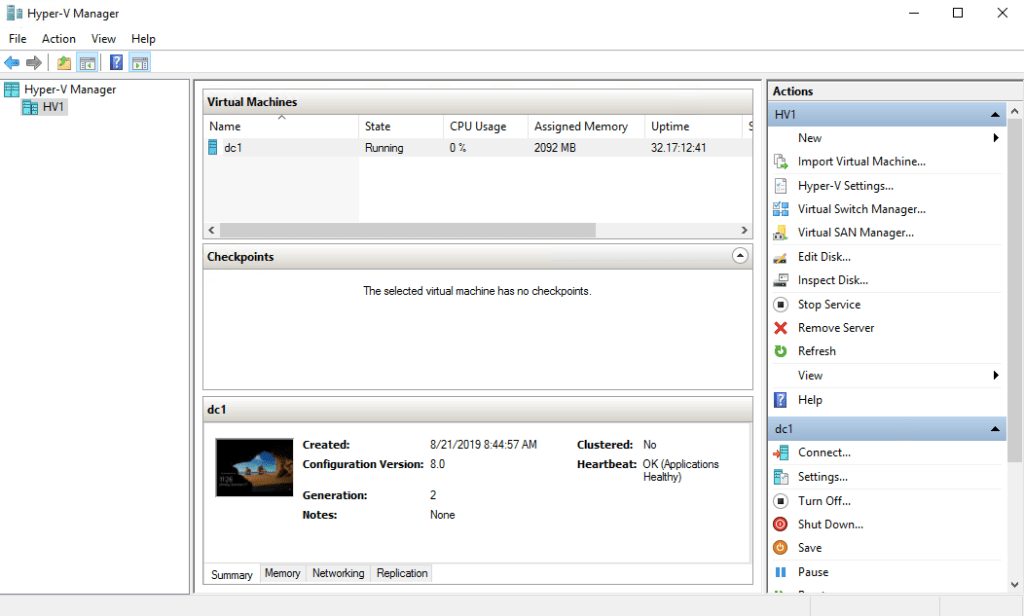

To dive more into the woes I was having with Hyper-V: some of it is my own doing, and some of it is the tools. Even before I was running S2D, I was running several Hyper-V boxes, each with its own storage. I will go into my issues with S2D soon. Hyper-V’s management tools are not good. You have several options for managing the systems; the first and easiest is Hyper-V Manager. This is a simple program that allows you to manage a Hyper-V system 1-1. I say 1-1 because if you have VMs that are part of a failover cluster, you can connect to them here to view them, but that is it. Hyper-V Manager only allows you to manage VMs that live on one hypervisor with no redundancy; for casual use, it works. I use it for my primary AD host because I don’t want anything fancy going on with that box; when I need to start everything from scratch, I need AD and DNS to come up cleanly.

Maybe you have outgrown one-off server management and want to move your systems into a cluster. Now it’s time for Failover Cluster Manager. You add all the servers into a Failover Cluster together and get through the validation checks you have to pass. Then there is a wizard to migrate your VMs from Hyper-V Manager into Failover Cluster Manager. One requirement is storage that every box in the cluster can use, either S2D or iSCSI (you can do things like Fibre Channel, but I was not going to do that). I used the tool, and the VM reported that all its files had been moved onto shared iSCSI storage that all the machines could use. Should be good, right? Things seemed to be working. Then I would move certain VMs to other hosts and it would fail, but only for some of them. It came down to either an ISO, one of the HDD hibernation files, or checkpoints (Microsoft’s version of snapshots) still living on one of the hosts, with the UI NOT mentioning this. Thus, when the VM tried to load on another system, a file it needed was not there and it could not load. Failover Cluster Manager is also fairly simplistic and does not give you a ton of tools. Again, Windows Admin Center adds some nice info on a standard cluster, but it is not fantastic, leaving you to dig through PowerShell to try to manage your Failover Cluster.
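A check along these lines could have caught the stray files before a move failed. This is a sketch, not something the migration wizard runs for you, and it assumes the shared storage is mounted as Cluster Shared Volumes under the default `C:\ClusterStorage` path; if your shared storage lives elsewhere, change `$sharedRoot`.

```powershell
# Assumption: shared storage is mounted under the default CSV path.
$sharedRoot = 'C:\ClusterStorage'

foreach ($vm in Get-VM) {
    # Collect every file location the VM depends on.
    $paths = @()
    $paths += $vm.ConfigurationLocation
    $paths += $vm.SnapshotFileLocation           # checkpoint files
    $paths += $vm.SmartPagingFilePath
    $paths += (Get-VMHardDiskDrive -VM $vm).Path # virtual disks
    $paths += (Get-VMDvdDrive -VM $vm).Path      # mounted ISOs

    # Report anything that is not on the shared volume.
    $paths |
        Where-Object { $_ -and -not $_.StartsWith($sharedRoot, [System.StringComparison]::OrdinalIgnoreCase) } |
        Sort-Object -Unique |
        ForEach-Object { [pscustomobject]@{ VM = $vm.Name; LocalPath = $_ } }
}
```

`Get-VM` only returns the VMs registered on the node where it runs, so this would need to be run on each host. Any row in the output is a file that will not be there when the VM lands on another node.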

On occasion the Hyper-V Virtual Machine Management service (vmms), which is in charge of managing the VMs and provides the interface to monitor, modify, and access them, would lock up. Hyper-V Manager and Failover Cluster Manager would show no status for the VMs, and I would have to restart the service. These minor issues would stack up over time.
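For reference, restarting the service from an elevated PowerShell prompt looks roughly like this. Running VMs live in their own worker processes, so in my experience they keep running while the management service restarts.

```powershell
# Check the state of the Hyper-V Virtual Machine Management service.
Get-Service -Name vmms

# Restart it; the management tools should repopulate shortly afterwards.
Restart-Service -Name vmms
```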

To manage Hyper-V remotely (meaning from any other system) you need to set up Windows Remote Management, winrm. By default this listens on plain HTTP rather than HTTPS. HTTPS can be turned on with a few commands on the command line, but doing so creates a cert based on your hostname and IP address. If you have more than one IP, OR you are in a Failover Cluster, you will be spending a lot of time customizing these certificates, because it will just get a cert for your host, and when that node becomes the Failover Cluster manager, it needs the cluster’s virtual IP and hostname in the cert. I had to create different certs for that virtual interface and put them on the different nodes manually; there are people in the Microsoft support forum talking about this. In case it helps anyone, here is an example of creating a cluster listener after manually creating a cluster cert.

winrm create winrm/config/listener?Address=IP:192.168.3.8+Transport=HTTPS '@{Hostname="home-cluster.home.ntbl.co";CertificateThumbprint="BFCDE6C85A0B12426A44BC3F44236313317C63CC";ListeningOn="192.168.3.8"}'
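The thumbprint in that command has to come from a certificate that names the cluster. One possible way to produce such a cert is a self-signed one, sketched below. This is an assumption on my part for illustration (a cert from an internal CA works just as well), and the node hostname is a placeholder.

```powershell
# Cluster name from the listener above, plus a hypothetical node name.
$names = 'home-cluster.home.ntbl.co', 'hv-node-1.home.ntbl.co'

# Create a self-signed cert in the machine store with both names as SANs.
$cert = New-SelfSignedCertificate -DnsName $names -CertStoreLocation 'Cert:\LocalMachine\My'

# This is the value to use for CertificateThumbprint in the listener.
$cert.Thumbprint

# List existing listeners to confirm what is currently configured.
winrm enumerate winrm/config/listener
```

A self-signed cert also has to be trusted by whatever machine you manage from, so expect to export and import it on the client side.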

There is also System Center Virtual Machine Manager, another package you can purchase from Microsoft to manage Hyper-V. Having dealt with System Center to manage Windows systems at work, I did not want to touch that at all. Hyper-V has a lot of things going for it, and the underlying code running VMs works well 99% of the time. I wish Microsoft put more time into growing the tools you use to manage it. Parts of the process, like setting up networking on different nodes, could be much smoother, especially in comparison with VMware Distributed Switching. I installed one of my systems on Windows Server Core (no user GUI) to learn more about that. If your primary interface needs a VLAN for management, this is a painful experience. You have to create the Hyper-V virtual switch, attach your management interface to it, and assign the VLAN, all from within PowerShell. If you need to do it, this is a good resource. Things like this, and the winrm issues, make Hyper-V feel unpolished even after being on the market for years.
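The Server Core VLAN steps above come out to roughly the following. The adapter name, switch name, and VLAN ID are placeholders, not the values from my hosts.

```powershell
# Hypothetical names and VLAN ID; adjust for your host.
$nic      = 'Ethernet'
$switch   = 'External'
$mgmtVlan = 10

# Create an external virtual switch on the physical NIC and keep a
# management OS (host) virtual adapter attached to it.
New-VMSwitch -Name $switch -NetAdapterName $nic -AllowManagementOS $true

# Tag the host's virtual adapter (named after the switch) with the VLAN.
Set-VMNetworkAdapterVlan -ManagementOS -VMNetworkAdapterName $switch -Access -VlanId $mgmtVlan

# Confirm the tagging took effect.
Get-VMNetworkAdapterVlan -ManagementOS
```

Creating the switch moves the host's IP configuration onto the new virtual adapter and briefly drops the network, so this is best done from the local console rather than over a remote session.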

S2D Issues

I wanted to put these systems into a failover cluster, allowing them to move VMs between each other as needed, except then I needed shared storage. I attempted to use iSCSI from my FreeNAS box; alas, with 7 old spinning drives, the speed was not great with more than a few VMs. Then I realized I had some spare SATA SSDs and could use S2D for shared storage. For those who have not attempted to set up S2D, your drives have to be either NVMe or attached to an internal disk controller. The system will refuse to work on any configuration that it does not like. Since most of my systems are small form factor PCs and I am just using a few SATA drives, I got USB 3.1 4-bay SATA enclosures. Not optimal, but they offer decent speed and allow me to add a good number of drives to each system without the large expense of a full RAID or SAS controller.

S2D refused to work with these drives. I believe it came down to the controller the USB enclosures were using and it not signaling something the systems wanted. The drives showing up as Removable also made Windows refuse. There are commands you can run, like the one below, that will enable more disk types to work, but I could not get my drives to show up.

(Get-Cluster).S2DBusTypes=4294967295
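When chasing this kind of refusal, it can help to ask Windows how it sees each disk and why it will not pool it. A minimal sketch using the standard Storage module cmdlets:

```powershell
# Show how each disk is presented and whether it is eligible for pooling.
Get-PhysicalDisk |
    Select-Object FriendlyName, BusType, MediaType, CanPool, CannotPoolReason |
    Format-Table -AutoSize

# The current bus type mask the cluster will accept for S2D.
(Get-Cluster).S2DBusTypes
```

A USB-attached disk would be expected to report a `BusType` of USB here, and `CannotPoolReason` gives Windows' own explanation for any disk where `CanPool` is false.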

Then I had an idea, an evil and terrible and great idea. I created 3 VMs, one on each Hyper-V box, then gave the 3 disks of each server to its VM in full. Now I had 3 VMs, each with 3 drives (and a separate OS drive), to run S2D. To the VM OS, it looked like it had 3 SCSI HDDs, which it was happy to use for S2D. I put these three Windows Servers into a failover cluster together and set up S2D. Overall, setup was not too bad. If you have Windows Admin Center configured, it is much easier to set up and use Storage Spaces Direct there than in the GUI in Windows Server. There are a ton of PowerShell commands for configuring S2D, and you will probably end up using a bunch of them.

This worked! The systems were in a failover cluster of their own, and my main failover cluster that controlled the VMs could use it as shared storage. If you use Windows Admin Center you can get nice stats from the storage cluster about the sync status of the disks. Every time one of the storage nodes reboots, the cluster needs to re-sync itself. There are different resiliency levels (the S2D equivalent of RAID levels) you can choose. I set it to keep 2 additional copies of each set of data (a three-way mirror), which means each node has a full copy of the data. This uses a lot of space, but I can have 1 node run everything (which ended up being overkill).
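To put a number on "a lot of space": with 2 additional copies, usable capacity is roughly one third of raw. The sketch below works that out for a 3-node, 3-drive layout and then shows what creating such a volume can look like. The drive size, volume name, and volume size are hypothetical, since those details are not part of this post.

```powershell
# Hypothetical drive size; the layout matches the 3 nodes x 3 drives above.
$driveSizeGB   = 500
$nodes         = 3
$drivesPerNode = 3
$copies        = 3   # original data plus 2 additional copies

$rawGB    = $nodes * $drivesPerNode * $driveSizeGB
$usableGB = $rawGB / $copies
"Raw: $rawGB GB, usable before reserve and metadata: $usableGB GB"

# Illustrative volume creation; PhysicalDiskRedundancy 2 means the volume
# tolerates two failures, i.e. a three-way mirror.
New-Volume -StoragePoolFriendlyName 'S2D*' `
    -FriendlyName 'VMStore' `
    -FileSystem CSVFS_ReFS `
    -Size 1TB `
    -ResiliencySettingName Mirror `
    -PhysicalDiskRedundancy 2
```

Real usable space ends up a bit lower than the arithmetic suggests, because the pool also needs reserve capacity for repairs.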

This setup ran decently for a while; other than the small VM overhead, it was fast and it worked. The issues arose when the second Tuesday of the month came around and I needed to do patching. The storage network was sitting on top of the hypervisors, and they didn’t really understand that. I often ran into problems where I would shut down one of the storage nodes to patch it, patch the host, and then the other 2 nodes would lock up or say all storage was lost. This would occur even when I preemptively moved the main node role and prepped for the restart. With storage dropping out from under all the VMs, they would die and need to be manually rebooted or repaired. After a few months of this, I started to look for a new setup.
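For comparison, the usual one-node-at-a-time patching flow for an S2D cluster looks something like the sketch below. The node name is a placeholder, and this is not a fix for the nested design described above; it only shows the drain and resync checks that go around each reboot.

```powershell
# Hypothetical storage node name.
$node = 'hv-node-1'

# Before touching anything, make sure no repair jobs are still running
# and every virtual disk reports Healthy.
Get-StorageJob
Get-VirtualDisk | Select-Object FriendlyName, HealthStatus, OperationalStatus

# Drain roles off the node, then patch and reboot it.
Suspend-ClusterNode -Name $node -Drain -Wait

# ... install updates and reboot here ...

Resume-ClusterNode -Name $node -Failback Immediate

# Wait for the resync to finish before moving on to the next node.
while (Get-StorageJob | Where-Object JobState -ne 'Completed') {
    Start-Sleep -Seconds 30
}
```

Skipping the final wait is the risky part: taking a second node down while the first is still resyncing leaves fewer healthy copies of the data than the mirror expects.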

All in all, I ended up running about 5 Windows VMs and 5 Linux VMs on Hyper-V for over a year with good uptime. One benefit of Hyper-V is that you get the hardware compatibility of Windows, which is vast. The big downside of Hyper-V is the tools around it. At times they seem unfinished, at other times buggy. My next post will be about the migration and my experience with vSphere 7.0!

Resources

http://woshub.com/configure-storage-spaces-direct-s2d-windows-server-2016/