Depending on your YUM repo configuration, Tenable Nessus plugins 137561, 138032, and 142002 can misfire. The result is three medium-severity alerts that should not be there.

Plugins:

- RHEL 7 : OpenShift Container Platform 4.3.25 containernetworking-plugins (RHSA-2020:2443) (Plugin 137561)

- RHEL 7 : OpenShift Container Platform 4.2.36 containernetworking-plugins (RHSA-2020:2592) (Plugin 138032)

- RHEL 7 / 8 : OpenShift Container Platform 4.6.1 package (RHSA-2020:4297) (Plugin 142002)

If you have a stack that uses podman on RHEL 7 and does not have the default redhat.repo file, the installed packages have newer versions in the OpenShift repos. Normally this would be fine: the Nessus scanner is supposed to check whether you have the OpenShift repos enabled and, if not, stop and accept the latest versions from the RHEL 7 OS repos as good. But that check fails if the RHEL 7 OS repos are missing. The OS repo HAS to be enabled as well, or the check will show as failing.

This situation can easily happen on an air-gapped system, or on a system managed by Satellite, where you are not using the default repo in redhat.repo. Luckily the baseurl does not matter. As long as the repo ID (the section header) is “rhel-7-server-rpms”, the check will come back clean; I also put the name= line in there for good measure.

/etc/yum.repos.d/redhat.repo

[rhel-7-server-rpms]

name = Red Hat Enterprise Linux 7 Server (RPMs)

baseurl=file:///opt/rhel_7_x86_64_os/

enabled = 1

gpgcheck = 0

gpgkey = file:///etc/pki/rpm-gpg/RPM-GPG-KEY-redhat-release

Before (or after) setting that, you will need to disable the YUM Red Hat Subscription Manager plugin, or the next time you run “yum” it will wipe your redhat.repo and reload it from Subscription Manager. To do this, edit /etc/yum/pluginconf.d/subscription-manager.conf and set “enabled=0”. Also run “subscription-manager config --rhsm.manage_repos=0” as root.
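To double-check the repo file across several hosts, something like the following rough sketch may help. It only tests for what is described above (an enabled section whose ID is “rhel-7-server-rpms”); it is not the logic Nessus itself uses, which I have not seen. The function name `has_enabled_repo` is made up for this example, and treating a missing `enabled` key as enabled is an assumption based on yum's usual default.

```python
import configparser


def has_enabled_repo(repo_text: str, repo_id: str = "rhel-7-server-rpms") -> bool:
    """Return True if repo_text defines an enabled [repo_id] section.

    Hypothetical helper: yum .repo files are INI-style, so the standard
    configparser module can read them.
    """
    # interpolation=None keeps any '%' in URLs from being treated specially;
    # strict=False tolerates duplicate sections or keys in hand-edited files.
    parser = configparser.ConfigParser(interpolation=None, strict=False)
    parser.read_string(repo_text)
    if not parser.has_section(repo_id):
        return False
    # Assumption: a repo with no "enabled" key counts as enabled.
    enabled = parser.get(repo_id, "enabled", fallback="1").strip().lower()
    return enabled in ("1", "true", "yes")


# Example input mirroring the redhat.repo contents shown above.
SAMPLE_REPO = """\
[rhel-7-server-rpms]
name = Red Hat Enterprise Linux 7 Server (RPMs)
baseurl=file:///opt/rhel_7_x86_64_os/
enabled = 1
gpgcheck = 0
"""

print(has_enabled_repo(SAMPLE_REPO))
```

On a real host you would pass in the contents of /etc/yum.repos.d/redhat.repo instead of the sample string.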

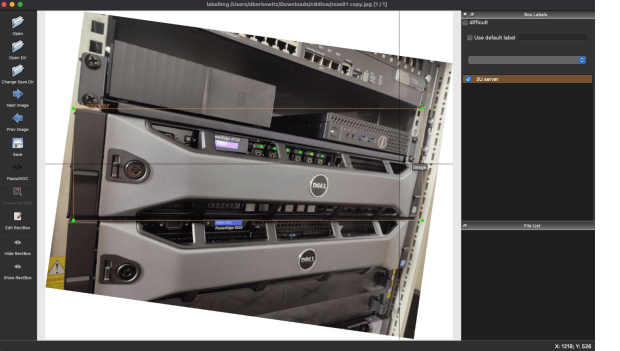

Below are examples of the errors you can see from Nessus.

Remote package installed : containernetworking-plugins-0.8.3-3.el7_8

Should be : containernetworking-plugins-0.8.6-1.rhaos4.2.el7

OR

Remote package installed : runc-1.0.0-69.rc10.el7_9

Should be : runc-1.0.0-81.rhaos4.6.git5b757d4.el7

The default thinking may be: it says I need to update to the OpenShift packages, so it makes sense to install the OpenShift repos. And if you get a Red Hat developer account to debug this, the OpenShift repos are right there. That is because the developer account gives you a lot of entitlements, including OpenShift. But if you add the OpenShift repos to a bunch of systems, you may become liable for OpenShift licenses, or get errors because those systems do not have the entitlements. The key is that the installed packages say “.el7_8”/“.el7_9” instead of “.rhaos4.2”. This is a plugin misclassification, not a need for updates.
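If you have a long scan report to triage, the release-tag hint above can be turned into a quick heuristic. This is only a sketch: the function names are invented for this example, the regular expressions are my guess at the tag patterns based on the four package strings shown above, and it assumes you have not intentionally enabled the OpenShift repos on the host.

```python
import re

# Assumed patterns, derived only from the examples in this post:
#   ".rhaos4.2" / ".rhaos4.6"  -> built for OpenShift
#   ".el7_8" / ".el7_9" / ".el7" -> plain RHEL dist tag
_OPENSHIFT_TAG = re.compile(r"\.rhaos\d+\.\d+")
_RHEL_TAG = re.compile(r"\.el\d+(_\d+)?")


def package_origin(nvr: str) -> str:
    """Classify a name-version-release string as 'openshift', 'rhel', or 'unknown'."""
    # Check the OpenShift tag first, because those builds also carry ".el7".
    if _OPENSHIFT_TAG.search(nvr):
        return "openshift"
    if _RHEL_TAG.search(nvr):
        return "rhel"
    return "unknown"


def is_likely_false_positive(installed: str, expected: str) -> bool:
    """True when a RHEL OS package is being compared against an OpenShift build."""
    return package_origin(installed) == "rhel" and package_origin(expected) == "openshift"


print(is_likely_false_positive(
    "containernetworking-plugins-0.8.3-3.el7_8",
    "containernetworking-plugins-0.8.6-1.rhaos4.2.el7",
))
```

A finding flagged this way still deserves a quick look, since the heuristic knows nothing about which repos the host is actually entitled to.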

Note: The image is a random AI generated one: Stable Diffusion Image, “computer with redhat logo on screen, in a field with mountains and a dinosaur in the background”. I think they are fun.