Preface

I am going to start with some background for those completely new to OpenShift and Kubernetes (shorthand “K8s”); feel free to jump to “Installation Steps for Single Node OpenShift” if you prefer to get straight to the steps. I explain why we chose OpenShift in a section after the tutorial, for those interested. This guide walks you through a Single Node OpenShift installation. It should take about 1-2 hours to have a basic system up and running.

In later posts I will go over networking, storage, and the rest of the parts you need to set up. I spoke to some Red Hat engineers, and they were confused when I said this system is not easy to install and that they need to make an easy installation disc like VMware or Microsoft have.

It is worth noting at this point that OKD exists. OKD is the upstream (or at least moving toward upstream), open-source version of OpenShift. You are closer to the bleeding edge, but you get MOST of the stack without any licensing. It is almost what CentOS was to Red Hat Enterprise Linux, except further upstream rather than in step with the product. There are areas where that is not true, and other hurdles to using it. I will make another post about setting up OKD, but the main issue is the lack of Operators that you get automatically with OpenShift. Operators like LVM for storage or NMState for network management are more difficult to configure.

Single Node OpenShift vs High Availability

There are two main ways to run OpenShift. The first is SNO, Single Node OpenShift. There is no high availability; everything runs on 1 master node, which is also your worker node. You CAN attach more worker servers to a SNO system, but if that main system goes down, you lose control of the cluster. The other mode is HA, where you have at least 3 nodes in your control plane. For production you would usually want HA, and I will have an article about that in the future; for now I will just install SNO.

Big Changes to Keep in Mind From VMware

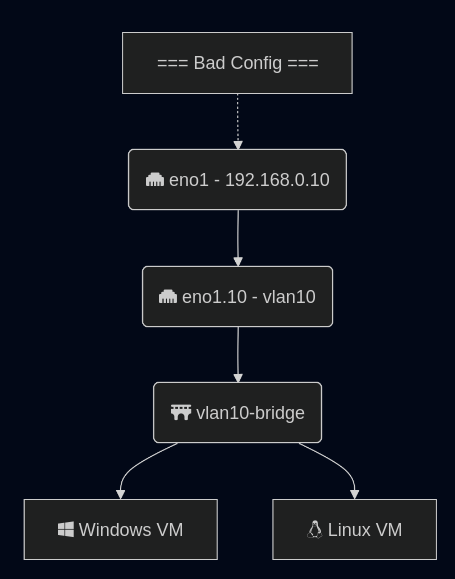

A quick note to all the administrators coming from VMware or other solutions: OpenShift runs on top of Red Hat CoreOS, an immutable OS based on Red Hat Enterprise Linux and ostree. The way OpenShift finds out which config to apply to your node is via DHCP and DNS. These are HARD REQUIREMENTS for your environment. The installation will fail, and you will have endless problems, if you do not have DHCP + DNS set up correctly; trust me, I have been there.

K8s Intro 101

For those who haven’t used Kubernetes before (me a few weeks ago), here are some quick things to learn. A cluster has “master” nodes and “worker” nodes: masters orchestrate, workers run pods. Master nodes can also be worker nodes.

OpenShift by default cannot run VMs. We are installing the Virtualization Operator (operators are like plugins), which will give us the bits we need to run virtualization. OpenShift has the OpenShift Virtualization Operator; OKD has KubeVirt. The OpenShift Virtualization Operator IS KubeVirt with a little polish on it and support from Red Hat.

Homelab SNO Installation

OpenShift is built to have a minimum of 2 disks. One holds the core OS and the containers that you want to run. The other is storage for VMs and container data. By default the installer does not support partitioning the disk, forcing you to have 2 disks. I wrote a script that injects partitioning data into the SNO configuration; the current SNO configuration does not seem to have another easy way to add this. The script, Openshift-Scripts/add_parition_rule.sh at main · daberkow/Openshift-Scripts, needs to be run right after “openshift-install”, Step 18. It is run with “$ ./add_parition_rule.sh ./ocp/bootstrap-in-place-for-live-iso.ign ./ocp/bootstrap-in-place-for-live-iso-edited.ign”, then “./ocp/bootstrap-in-place-for-live-iso-edited.ign” is used for Step 20.

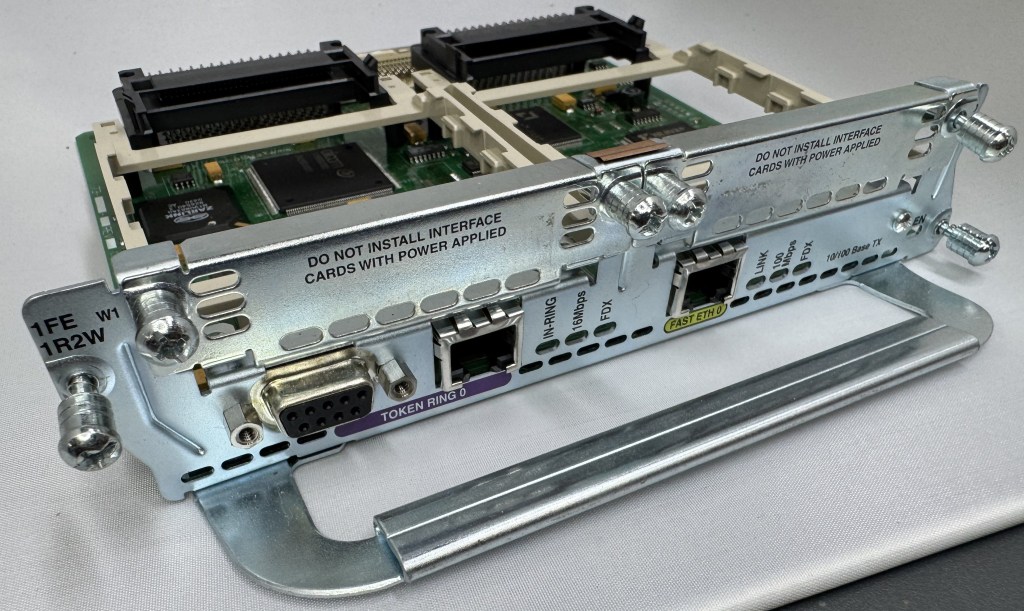

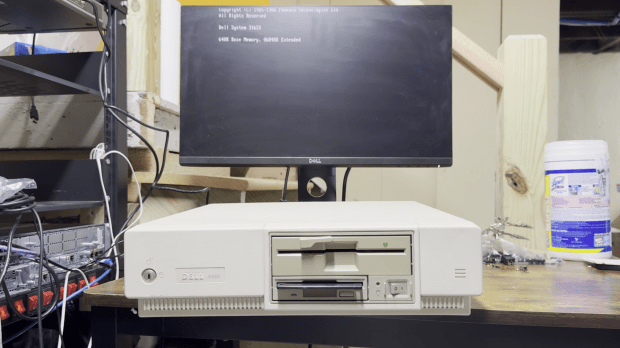

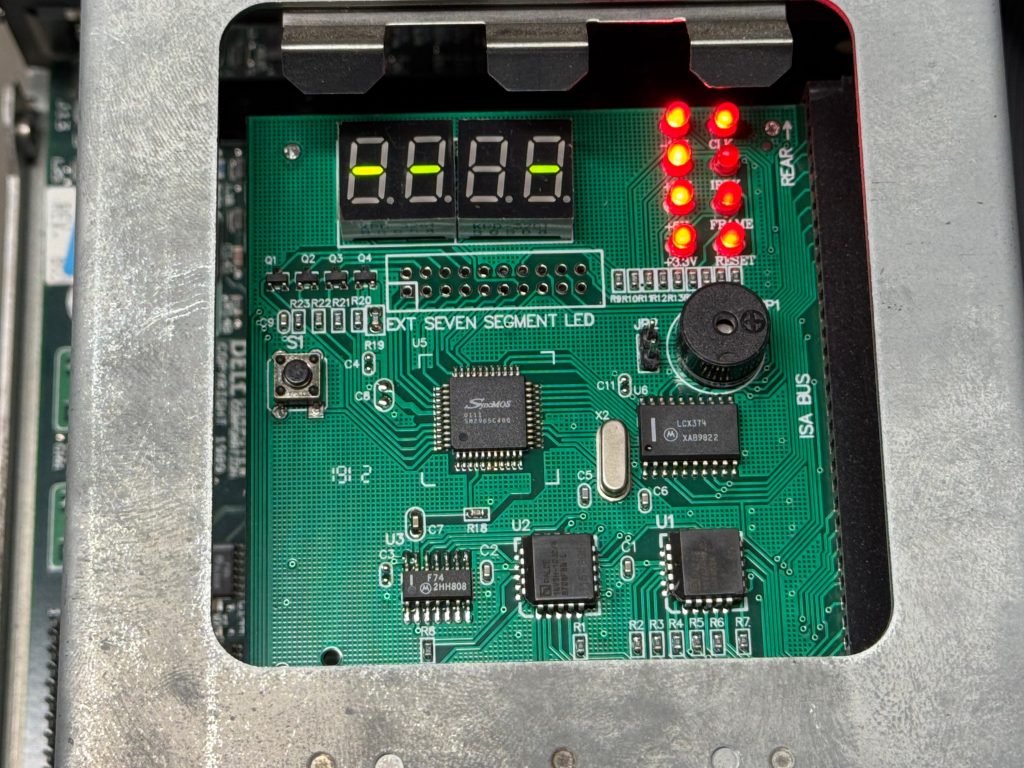

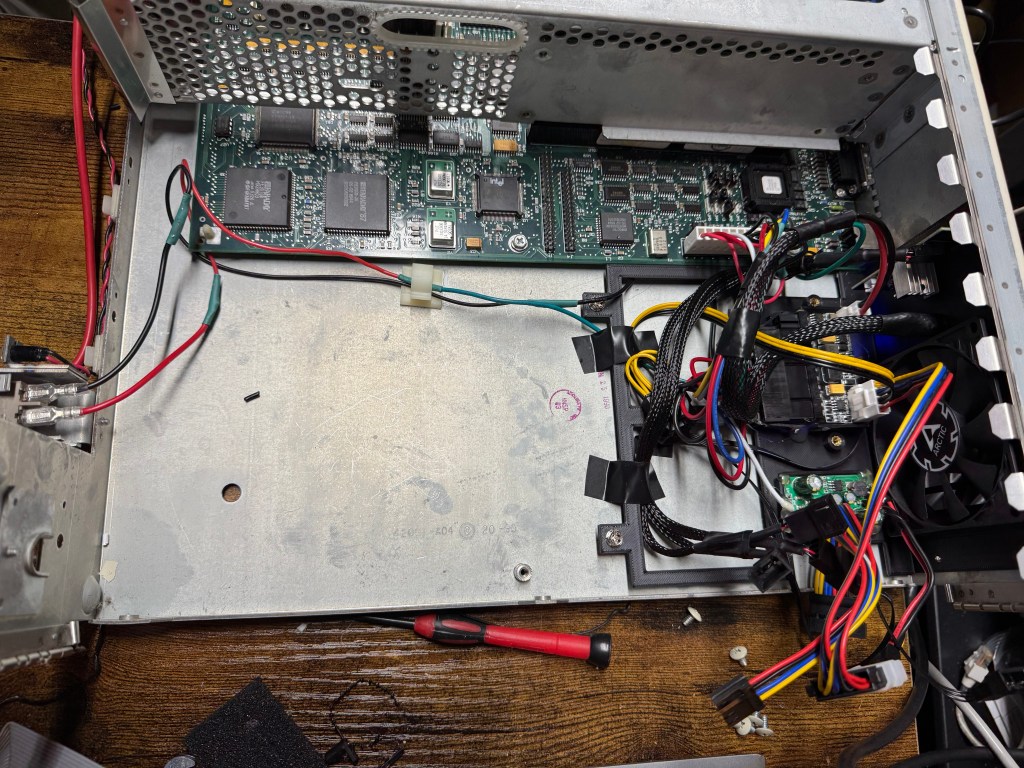

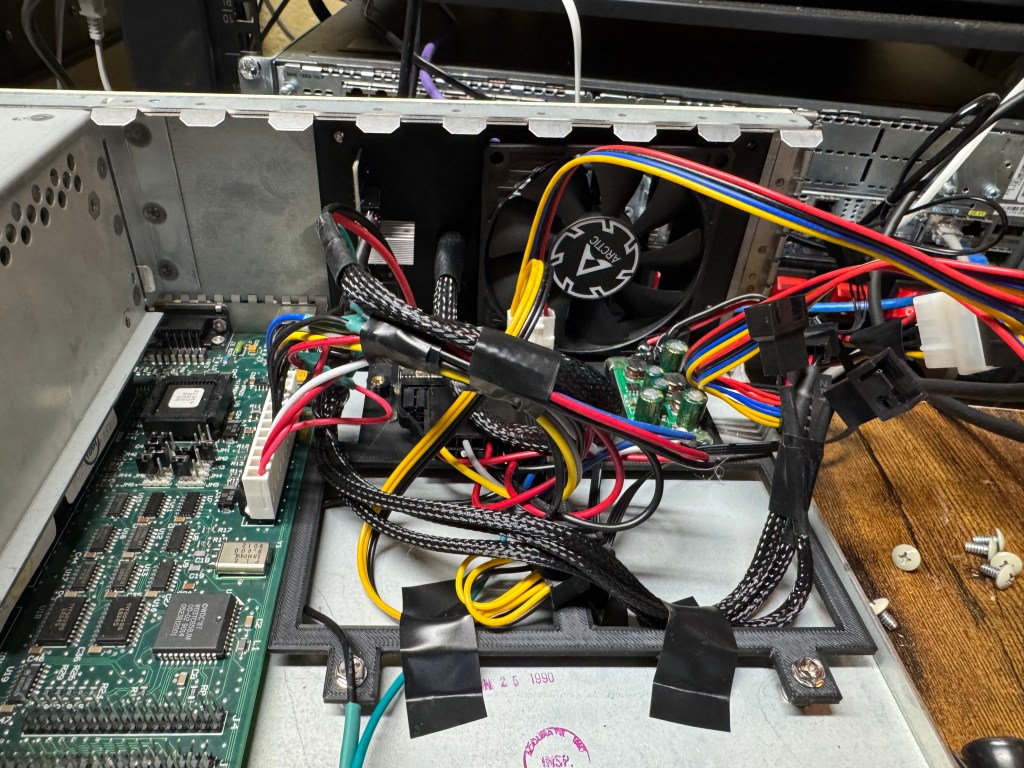

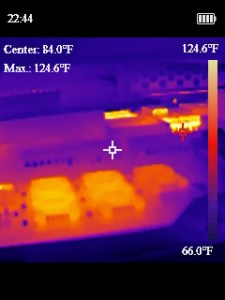

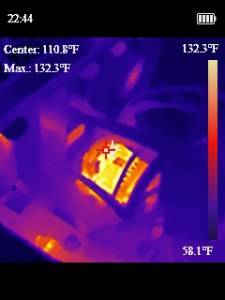

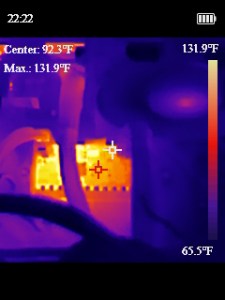

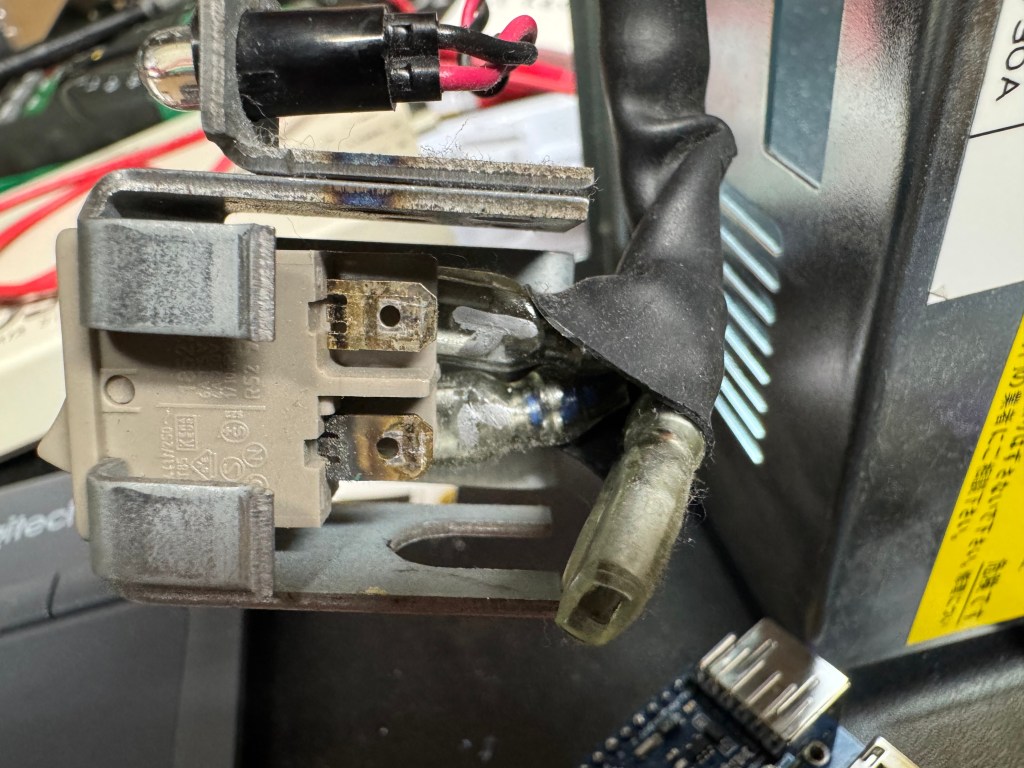

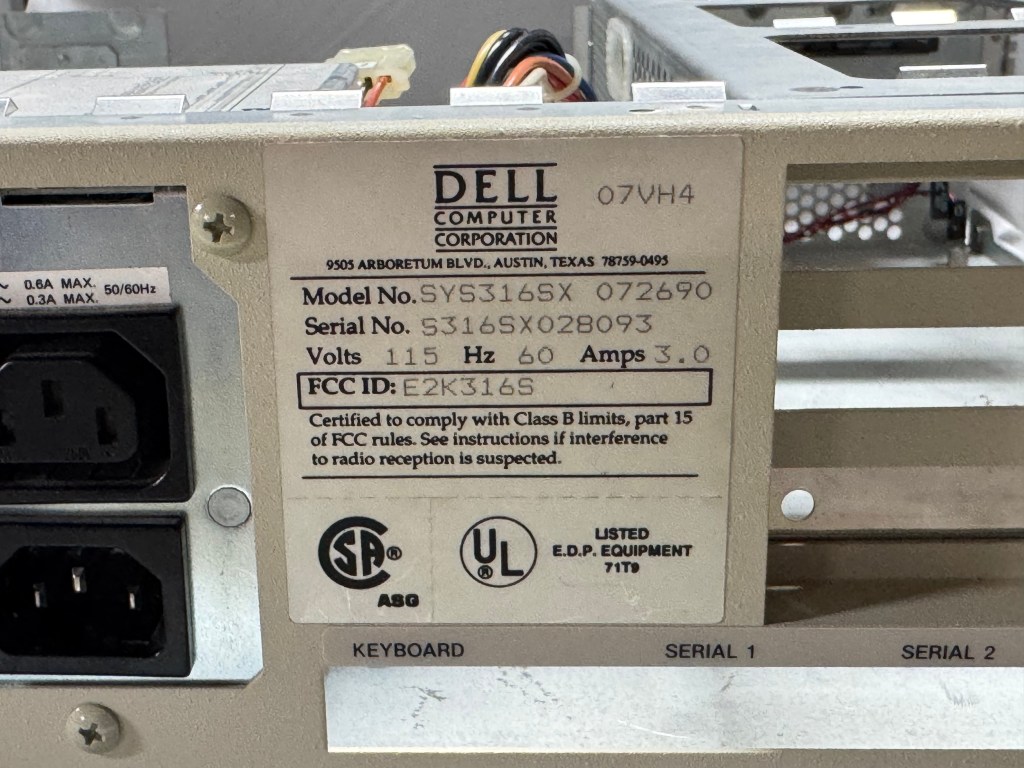

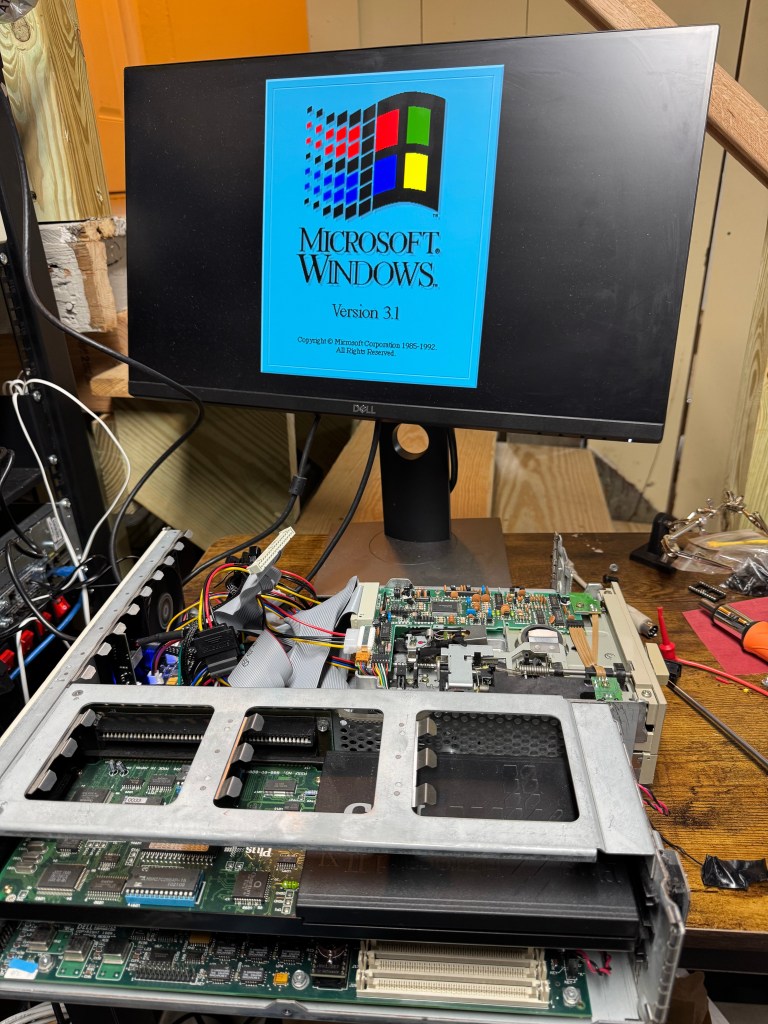

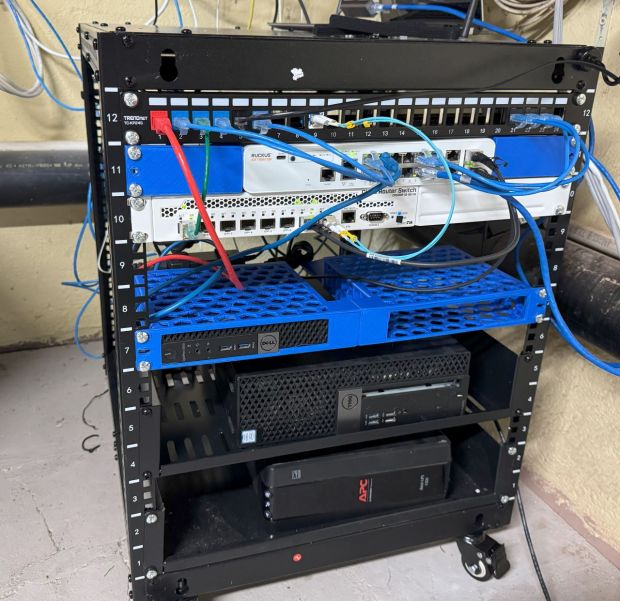

I am running on an HP ProDesk 600 G5 Mini with an Intel i5-9500T, 64GB of RAM, and a 1TB NVMe drive. Any computer you can install an OS onto should work, as long as it has at least 100GB of storage and probably 32GB of RAM. Red Hat CoreOS is a lot more accepting of random hardware than VMware ESXi is.

Installation Steps for Single Node OpenShift

OpenShift has several ways to do an installation: you can use their website and do the Assisted Installer, or create an ISO with all the details baked in. This time we will go over how to do it by creating a custom ISO with an embedded ignition file.

The following steps are for a Mac or Linux computer. The main commands you will use to interact with your cluster are `kubectl` and `oc`; `oc` is the OpenShift client, and a superset of the features in the standard `kubectl` command. Those tools have Windows builds. `openshift-install` does not, so we can’t install with just Windows. You can try to use WSL to do the install, but it always gave me issues. The Linux system needs to be RHEL 8+/Fedora/Rocky 8+ or Ubuntu 20.10+ because of the requirement for Podman.

As mentioned, DHCP + DNS are very important for OpenShift. We need to plan what our cluster DOMAIN and CLUSTER NAME will be. For this I will use “cluster1” as the cluster, and “example.com” as the domain. Our example IP will be 192.168.2.10 for our node. When I put a $ at the start of a line, that is a terminal command.

- First, we will set up DNS, which is a big requirement for OpenShift. To do that you need a static IP address, so give the system a reservation or static IP address in your environment.

- Now make the following addresses point to that IP. Because we are on a single node, these can all point to one IP. Note this is for SNO; larger clusters need different hosts and VIPs for these names.

- api.cluster1.example.com -> 192.168.2.10

- api-int.cluster1.example.com -> 192.168.2.10

- *.apps.cluster1.example.com -> 192.168.2.10

- The two api addresses are used for K8s API calls; *.apps is a wildcard under which all the applications within the cluster are accessed. The cluster uses the hostname in the web request to figure out where the traffic should go, so everything has to be done via DNS name and not IP.

- Note: The wildcard for the last entry is needed for some services to work. You can add the entries individually, but it becomes a lot of work. Wildcards cannot be used in a hosts file, which means you do need proper DNS. There is a footnote below covering the DNS entries you may need if you want to run out of a hosts file.

- Go to Download Red Hat Openshift | Red Hat Developer.

- Sign up for a Red Hat Developer account and click “Deploy in your datacenter”.

- Click “Run Agent-based Installer locally”.

- Download the OpenShift installer, your “pull secret”, and a command line tool.

- You can also download the installer and clients from: https://mirror.openshift.com/pub/openshift-v4/clients/ocp/

- Open a terminal and make a “sno” folder wherever you want.

- Install Podman on your platform; on Windows that means within WSL2, not on the Windows host.

- Copy/extract the openshift-install, oc, and kubectl commands to that folder.

- $ export OCP_VERSION=latest-4.19

- $ export ARCH=x86_64

- $ export ISO_URL=$(./openshift-install coreos print-stream-json | grep location | grep $ARCH | grep iso | cut -d\" -f4)

- $ curl -L $ISO_URL -o rhcos-live-fresh.iso

- I used “rhcos-live-fresh.iso” for the clean ISO, then copied it every time I needed to start over; I found this easier than redownloading.

- $ cp rhcos-live-fresh.iso rhcos-live.iso

- Create a text file called “install-config.yaml”, copy the following and edit for your setup:

    apiVersion: v1
    baseDomain: example.com
    compute:
    - name: worker
      replicas: 0
    controlPlane:
      name: master
      replicas: 1
    metadata:
      # Cluster name; it must match the DNS entries created earlier (api.cluster1.example.com)
      name: cluster1
    networking:
      clusterNetwork:
      - cidr: 10.128.0.0/14
        hostPrefix: 23
      machineNetwork:
      - cidr: 192.168.2.0/24
      networkType: OVNKubernetes
      serviceNetwork:
      - 172.30.0.0/16
    platform:
      none: {}
    bootstrapInPlace:
      installationDisk: /dev/nvme0n1
    pullSecret: '{"auths":{"cloud.openshift.com":{"auth":"b3BllBFa…0M4NjNSaEo0RmNXZw==","email":"danisawesome@example.com"}}}'
    sshKey: |
      ssh-rsa AAAAB3QQe/… /h3Pss= dan@home

Note: I have removed most of my pull secret, and ssh key

- baseDomain: This is your main domain

- clusterNetwork: The internal network used by the system, DO NOT TOUCH

- machineNetwork: Network your system will have a NIC on, change this to your network

- serviceNetwork: Another internally used network, DO NOT TOUCH

- installationDisk: The disk to install to

- pullSecret: Insert that secret downloaded from Red Hat in Step 6

- sshKey: The public half of your local account’s SSH key; this will be used for auth later

- $ mkdir ocp

- $ cp install-config.yaml ocp

- $ ./openshift-install --dir=ocp create single-node-ignition-config

- Optional, to run off a single disk (then use the edited .ign file in the embed step below):

- $ ./add_parition_rule.sh ./ocp/bootstrap-in-place-for-live-iso.ign ./ocp/bootstrap-in-place-for-live-iso-edited.ign

- $ alias coreos-installer='podman run --privileged --pull always --rm -v /dev:/dev -v /run/udev:/run/udev -v $PWD:/data -w /data quay.io/coreos/coreos-installer:release'

- $ coreos-installer iso ignition embed -fi ocp/bootstrap-in-place-for-live-iso.ign rhcos-live.iso

- Boot rhcos-live.iso on your computer. The install will take 20 or more minutes, then the system should reboot.

- If everything works, the system will reboot, and after 10 or so minutes of the system loading pods, https://console-openshift-console.apps.cluster1.example.com/ should load from your client computer. The login is stored in your sno/ocp/auth folder.
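As a rough sketch of using that auth folder from the terminal: `openshift-install` writes a `kubeconfig` and a `kubeadmin-password` file there, and both `oc` and `kubectl` read the `KUBECONFIG` environment variable. The helper name `sno_use_auth` is made up for this example, and the `ocp` default is just the directory name used in the steps above.

```shell
# Sketch: point the CLI at the credentials openshift-install wrote.
# sno_use_auth is a hypothetical helper; "ocp" is the --dir used earlier.
sno_use_auth() {
  auth_dir="${1:-ocp}/auth"
  if [ ! -f "$auth_dir/kubeconfig" ]; then
    echo "no kubeconfig in $auth_dir" >&2
    return 1
  fi
  # oc and kubectl both honor the KUBECONFIG environment variable.
  KUBECONFIG="$(cd "$auth_dir" && pwd)/kubeconfig"
  export KUBECONFIG
  echo "KUBECONFIG=$KUBECONFIG"
  # The web console password for the kubeadmin user sits next to it.
  if [ -f "$auth_dir/kubeadmin-password" ]; then
    echo "console login: kubeadmin / $(cat "$auth_dir/kubeadmin-password")"
  fi
}
```

After sourcing the function, something like `sno_use_auth ocp && oc get nodes` should list the single node once the cluster is up.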

Many caveats here: if your install fails to progress, you can ssh in with the SSH key you set in the install-config.yaml file. That is the only way to get in. Check journalctl to see if there are issues. It’s probably DNS. You can put the host names above into the hosts file of the installer environment and then, after the reboot, into the hosts file of the host itself, so it can boot without needing DNS.
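Since DNS is the usual suspect, here is a small sketch for checking the records from your client before booting the ISO. It assumes `dig` is installed; the function names are invented for this example, and a concrete console hostname stands in for the `*.apps` wildcard because a wildcard itself cannot be queried.

```shell
# Sketch: list the names a single-node cluster needs and check them with dig.
# sno_dns_names and check_sno_dns are hypothetical helper names.
sno_dns_names() {
  cluster="$1"; domain="$2"
  printf '%s\n' \
    "api.${cluster}.${domain}" \
    "api-int.${cluster}.${domain}" \
    "console-openshift-console.apps.${cluster}.${domain}"
}

# Resolve each name and compare the answer with the expected node IP.
check_sno_dns() {
  cluster="$1"; domain="$2"; expected_ip="$3"
  status=0
  for name in $(sno_dns_names "$cluster" "$domain"); do
    # With a CNAME chain, the last line of +short output is the address.
    resolved="$(dig +short "$name" | tail -n 1)"
    if [ "$resolved" = "$expected_ip" ]; then
      echo "OK   $name -> $resolved"
    else
      echo "FAIL $name -> ${resolved:-no answer} (expected $expected_ip)"
      status=1
    fi
  done
  return "$status"
}
```

With the example values from this guide, the call would be `check_sno_dns cluster1 example.com 192.168.2.10`; any FAIL line is worth fixing before you start the install.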

You CAN build an x86_64 image using an ARM Mac. You can also create an ARM OpenShift installer to run on a VM on a Mac. The steps are very similar for an ARM Mac, except the aarch64 binaries are at: mirror.openshift.com/pub/openshift-v4/aarch64/clients/ocp/latest-4.18/, and you use “export ARCH=aarch64”. On an ARM Mac, be careful to use the x86_64 installer when targeting an x86_64 server, and an aarch64 installer for ARM VMs. Otherwise you will get “ERROR: release image arch amd64 does not match host arch arm64” and have to go to Simon Krenger’s post of the same name to find out why.

Hopefully this helps someone. I think OpenShift and OKD could be helpful for a lot of people looking for a hypervisor, but the docs and getting-started materials are hard to wrap your head around. I plan to make a series of posts to help people get going. Feel free to drop a comment if this helps, or if something isn’t clear.

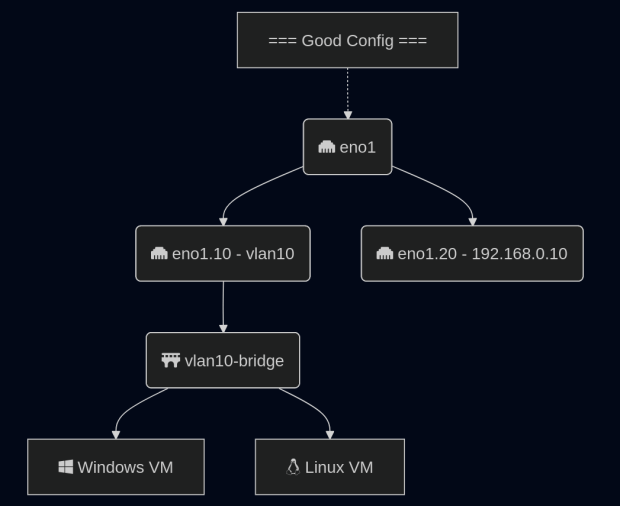

The series continues in: Step-By-Step Getting Started with High Availability OpenShift 4.19 for a Homelab, Step-By-Step Setting Up Networking for Virtualization on OpenShift 4.19 for a Homelab, Step-By-Step Setting Up Local LVM Storage for Virtualization on OpenShift 4.19 for a Homelab

DNS SNO Troubles

This section is optional, and for those who would like to run a stack without external DNS. It can lead to the stack behaving oddly; if you don’t need this, you may not want to do it. All this was tested on 4.19.17.

The issue you run into here is how DNS works in OpenShift: pods are given CoreDNS entries, and they are given a copy of your host’s resolv.conf. If you want to start an OpenShift system completely air-gapped, with no external DNS, you need the entries we stated in other articles, mainly: api.<cluster>.<domain>, api-int.<cluster>.<domain>, *.apps.<cluster>.<domain>, master0.<cluster>.<domain>. Wildcard lookups cannot be in a hosts file. Luckily, OpenShift ships with dnsmasq installed on all the hosts, and dnsmasq can serve them.

Our flow for DNS will be: the host itself runs dnsmasq, and points to itself for DNS. It has to point to itself on its public IP because that resolv.conf file will be baked into pods; if you put 127.0.0.1 then pods will get that and fail to hit DNS. Then dnsmasq points to your external DNS servers. That way, all lookups hit dnsmasq first, then can be forwarded to the outside.
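To make that flow concrete, here is a sketch of a dnsmasq config fragment that answers the cluster names locally and forwards everything else upstream. It illustrates the idea only and is not necessarily what the script described below writes into the ignition file. `sno_dnsmasq_conf` is a made-up helper name; `address=`, `server=`, and `no-resolv` are standard dnsmasq options, and `address=/name/ip` also answers for every subdomain of `name`, which is how the `*.apps` wildcard is covered.

```shell
# Sketch: print a dnsmasq config fragment for a single-node cluster.
# Arguments: cluster name, base domain, node IP, then one or more upstream DNS servers.
sno_dnsmasq_conf() {
  cluster="$1"; domain="$2"; node_ip="$3"
  shift 3
  echo "# Do not take upstream servers from resolv.conf (it points back at this host)."
  echo "no-resolv"
  for host in api api-int master0; do
    echo "address=/${host}.${cluster}.${domain}/${node_ip}"
  done
  echo "# address=/name/ip also matches every subdomain, so this covers *.apps."
  echo "address=/apps.${cluster}.${domain}/${node_ip}"
  for upstream in "$@"; do
    echo "server=${upstream}"
  done
}
```

For example, `sno_dnsmasq_conf cluster1 example.com 192.168.2.10 1.1.1.1` prints a fragment you could compare against whatever ends up on the host.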

When installing OpenShift, there is the install environment itself, then the OS after reboot; we need these entries to be in both environments.

I have created a script for this; it is used like the partition script from the SNO post. To use it, create your ignition files with openshift-install, then run $ ./add_dns_settings.sh ./ocp/bootstrap-in-place-for-live-iso.ign ./ocp/bootstrap-in-place-for-live-iso-edited.ign and install with that edited ignition file.

This allows you to set all the settings you need, plus a static IP setting for the host that will run the single node. When installing this way, you will need to add some hosts file entries to your client, because outside the cluster the DNS entries don’t exist. The new SNO system is not in external DNS, and DNS names are how OpenShift routes traffic internally. Adding the line below to your client’s hosts file, with cluster and domain changed, should be enough to connect:

192.168.1.10 console-openshift-console.apps.<cluster>.<domain> oauth-openshift.apps.<cluster>.<domain>
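If you rebuild the cluster a few times, a small sketch like this keeps the client hosts file from collecting duplicates. Both function names are invented for this example, and the default path `/etc/hosts` assumes a Mac or Linux client.

```shell
# Sketch: build the client hosts-file line and append it only if it is missing.
# sno_client_hosts_line and add_hosts_line are hypothetical helper names.
sno_client_hosts_line() {
  # Arguments: node IP, cluster name, base domain.
  printf '%s console-openshift-console.apps.%s.%s oauth-openshift.apps.%s.%s\n' \
    "$1" "$2" "$3" "$2" "$3"
}

add_hosts_line() {
  line="$1"; file="${2:-/etc/hosts}"
  # -x matches whole lines, -F treats the line as a fixed string.
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}
```

Writing to the real `/etc/hosts` needs root, so in practice you might print the line with `sno_client_hosts_line 192.168.1.10 cluster1 example.com` and paste it in by hand.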

Backstory About Why OpenShift

After all the recent price hikes by Broadcom for VMware, my work, like many, has been looking for alternatives. High hypervisor costs not only make your existing clusters expensive, they also make it hard to grow those clusters. We already run a lot of Kubernetes and wanted a new system that we could slot in, allowing K8s and VMs to run side by side (without paying the thousands and thousands per node that Broadcom wants). I was tasked with looking at the alternatives out there. We were already planning on going with OpenShift, as our dev team had started using it, but it doesn’t hurt to see what else is available. The requirements were: it had to be on-prem, be able to segment data by VLAN, run VMs with no outside connectivity (more on that later), and have shared storage. There were more, but those were the general guidelines. For testing, the first thing I installed was Single Node OpenShift (SNO), and that’s what I will start going over here. It does the job decently well, but the ramp-up is rough. Gone are the nice VMware installers, and welcome to writing YAML files.

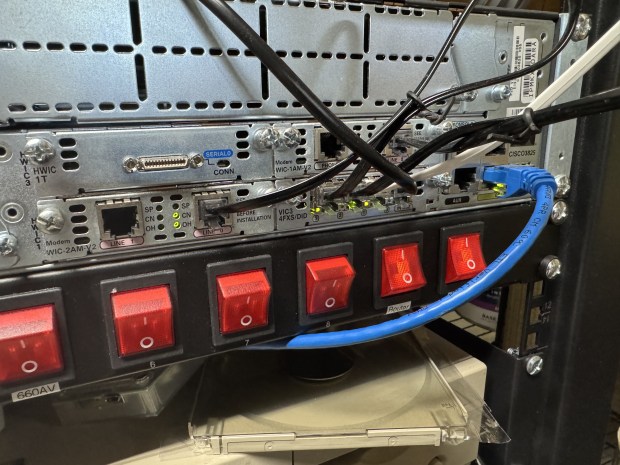

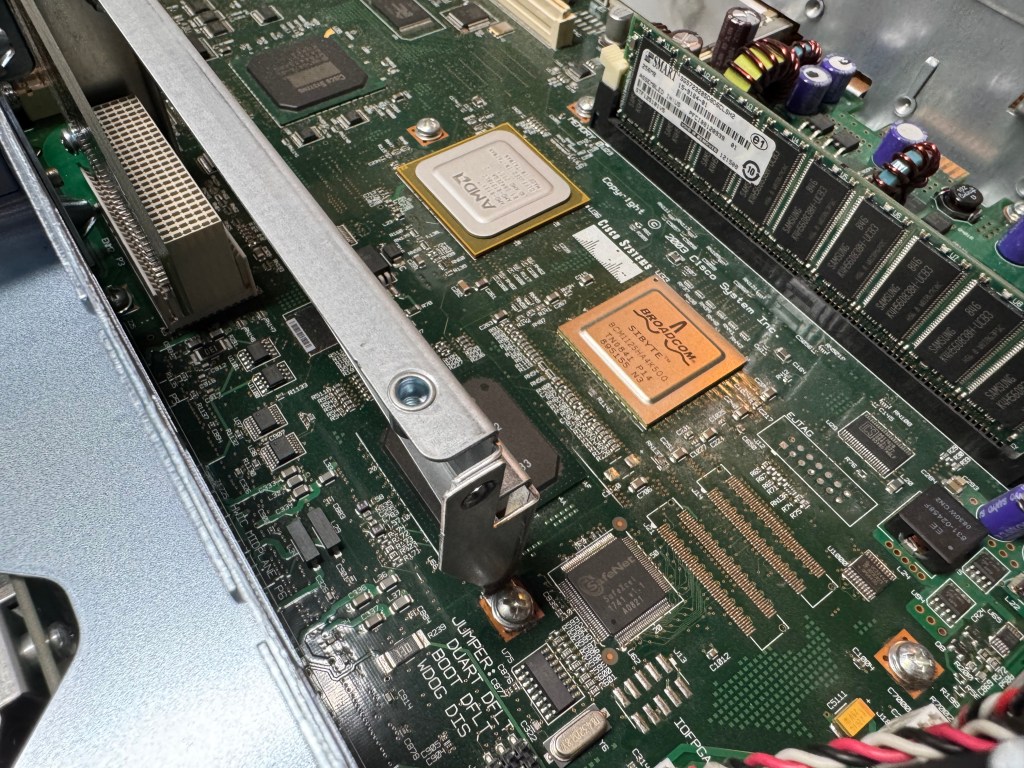

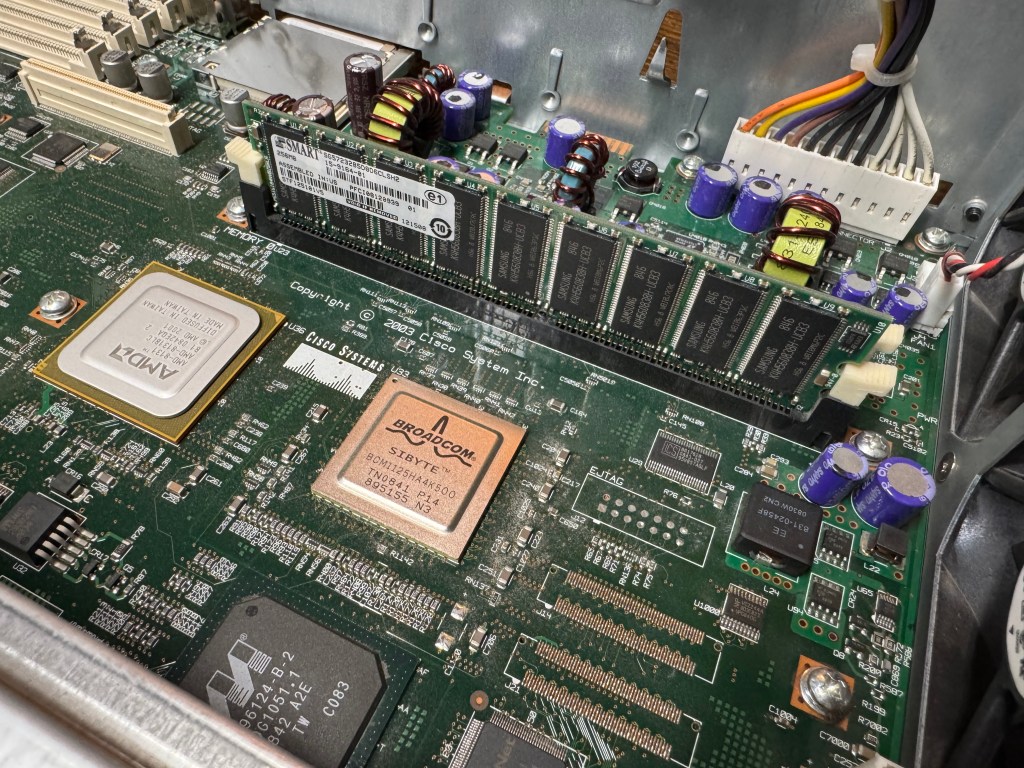

The other big players were systems like Hyper-V, Nutanix, Proxmox, Xen Orchestra, and KVM. We are not a big Microsoft shop and a lot of our devs had a bad experience with Hyper-V, so we scratched that one. Also, Hyper-V doesn’t seem all that loved by Microsoft for on-prem, so that turned us away. I investigated Nutanix, but they have a specific group of hardware they want to work with, and a very specific disk configuration where each server needs 3+ SSDs to run the base install. I did not want to deal with that, so we moved on before even piloting it. Proxmox is a community favorite, but we didn’t want to use it for production networks, and thought getting it past security teams at our customers would be difficult. Xen Orchestra is getting better, but in testing it had some rough spots, and getting the cluster manager going gave us some difficulty. This left raw KVM, and that was a non-starter because we want users to be able to manage the cluster easily.

Without a great alternative, and with the company already wanting to push forward on Red Hat OpenShift, I started diving into what it would take to get VMs to where we needed them to be. What I generally found is that there is a working solution here, one that Red Hat is quickly iterating on. It is NOT 1:1 with VMware. You are running VMs within Pods in a K8s cluster. That means you get the flexibility of K8s and the ability to set things up how you want, along with its troubles and difficulties. Like Linux, the great thing about K8s is that there are 1000 ways to do anything; that is also its greatest weakness.

Footnotes / Reading Materials

DNS Entries needed for normal use:

SNO on OCP-V – OpenShift Examples

Red Hat OpenShift Single Node – Assisted Installer – vMattroman

Fedora CoreOS VMware Install and Basic Ignition File Example – Virtualization Howto

butane/docs/config-openshift-v4_18.md at main · coreos/butane

Some useful information for networking: Deploying Single Node Openshift (SNO) on Bare Metal — Detailed Cookbook | by Reishit Kosef | Medium

Offline installs